Message Limits

Explain Like I'm Five

Think of messages like cups of water from a big jug. Each day, you get a jug with a certain number of cups.

When you chat with the AI, you pour some water. A short question? That still uses one full cup, the smallest amount you can pour. A long conversation with a big document? That's like pouring several cups at once.

The longer your chat gets, the more water each new message needs, because the AI has to remember everything you've already said.

Starting a new chat is like starting over with an empty glass: you only pay for what is in that new request, not for everything from the old conversation.

What is a "message"?

One counted message covers up to 16,000 effective tokens: your input plus the model's output, after the cache discount described below.

That is roughly:

- about 12,000 words

- about 24 pages of plain text

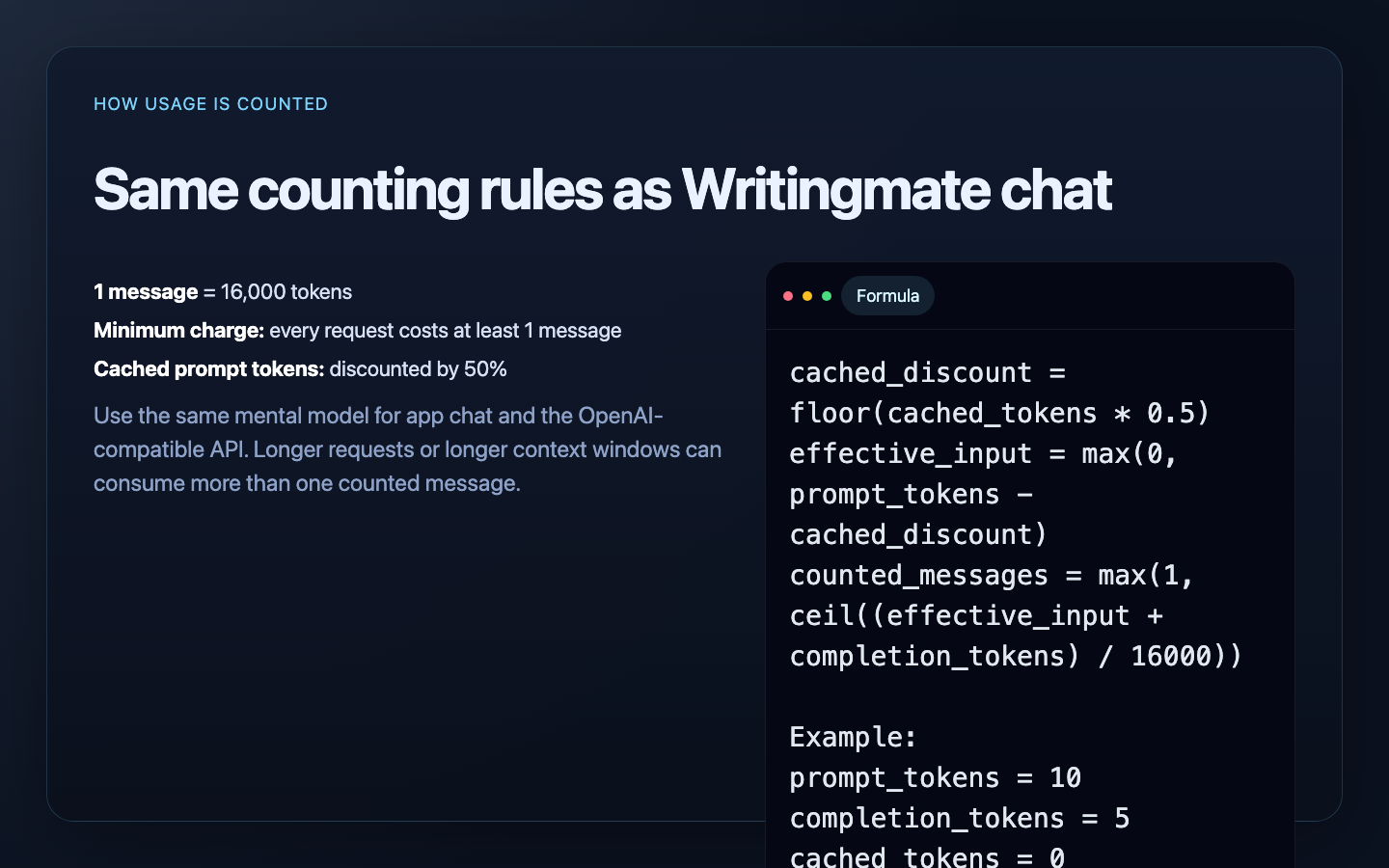
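As a rough planning aid, the ratios above (about 0.75 words per token and 500 words per page) can be turned into an estimate of how many messages a pasted document will use. This is a sketch only: the function names are hypothetical, and real token counts depend on the model's tokenizer and on the language of the text.

```python
import math

# Ratios implied by the figures above: 16,000 tokens is about 12,000 words
# (0.75 words per token) and about 24 pages (500 words per page).
# Real tokenization varies by model and language, so treat these as rough guides.
TOKENS_PER_MESSAGE = 16_000
WORDS_PER_TOKEN = 12_000 / 16_000   # 0.75
WORDS_PER_PAGE = 12_000 / 24        # 500


def estimate_messages_for_words(words: int, reply_tokens: int = 0) -> int:
    """Hypothetical helper: rough message count for `words` words plus a reply."""
    prompt_tokens = math.ceil(words / WORDS_PER_TOKEN)
    # Round up to whole messages, never less than 1.
    return max(1, math.ceil((prompt_tokens + reply_tokens) / TOKENS_PER_MESSAGE))


def estimate_messages_for_pages(pages: float, reply_tokens: int = 0) -> int:
    """Hypothetical helper: same estimate, starting from pages of plain text."""
    return estimate_messages_for_words(round(pages * WORDS_PER_PAGE), reply_tokens)


print(estimate_messages_for_pages(10))  # 5,000 words, about 6,667 tokens -> 1
print(estimate_messages_for_pages(60))  # 30,000 words, 40,000 tokens -> 3
```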

How usage is calculated

For every request, Writingmate counts:

- your prompt/input tokens

- the model’s response/output tokens

- cached prompt tokens, which are part of your input but count at a 50% discount

This applies to:

- normal Writingmate chat

- OpenAI-compatible API requests made with Writingmate Developer Keys

Exact counting formula

cached_discount = floor(cached_prompt_tokens * 0.5)

effective_input = max(0, prompt_tokens - cached_discount)

counted_messages = max(1, ceil((effective_input + completion_tokens) / 16000))
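The formula can be written as a small Python function for estimating usage from token counts you already have. The function and parameter names are illustrative, not Writingmate's actual code; the two calls reproduce the worked examples later on this page.

```python
import math

TOKENS_PER_MESSAGE = 16_000


def counted_messages(prompt_tokens: int, completion_tokens: int,
                     cached_prompt_tokens: int = 0) -> int:
    """Apply the counting formula to one request's token usage (illustrative)."""
    # Cached prompt tokens count at half rate: subtract half of them, rounded down.
    cached_discount = math.floor(cached_prompt_tokens * 0.5)
    effective_input = max(0, prompt_tokens - cached_discount)
    # Round up to whole messages, with a floor of 1 message per request.
    return max(1, math.ceil((effective_input + completion_tokens) / TOKENS_PER_MESSAGE))


print(counted_messages(10, 5, 0))              # 1
print(counted_messages(19_000, 3_000, 4_000))  # 2
```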

Minimum usage rule

Every interaction consumes at least 1 message, even if it is tiny.

Examples:

- 16,000 effective tokens or fewer = 1 message

- 25,000 effective tokens = 2 messages

- 48,000 effective tokens = 3 messages
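A sketch of the rounding step on its own, taking the effective token total as input. The last lines illustrate one consequence of the 1-message floor: many tiny requests can cost more than one combined request. This is arithmetic only; combining requests also changes what the model sees, so it is not always appropriate.

```python
import math

TOKENS_PER_MESSAGE = 16_000


def messages_for_effective_tokens(effective_tokens: int) -> int:
    """Counted messages for one request, given its effective token total."""
    return max(1, math.ceil(effective_tokens / TOKENS_PER_MESSAGE))


# One representative value for each case listed above.
for total in (9_000, 25_000, 48_000):
    print(total, messages_for_effective_tokens(total))
# 9000 1
# 25000 2
# 48000 3

# Because of the 1-message floor, ten separate 500-token requests cost
# 10 messages, while one combined 5,000-token request costs 1.
separate = sum(messages_for_effective_tokens(500) for _ in range(10))
combined = messages_for_effective_tokens(10 * 500)
print(separate, combined)  # 10 1
```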

Worked examples

Small request

If a request returns:

prompt_tokens = 10

completion_tokens = 5

cached_tokens = 0

then:

effective_total = 10 + 5 = 15

counted_messages = max(1, ceil(15 / 16000)) = 1

Larger request with cache discount

If a request returns:

prompt_tokens = 19000

completion_tokens = 3000

cached_tokens = 4000

then:

cached_discount = floor(4000 * 0.5) = 2000

effective_input = 19000 - 2000 = 17000

effective_total = 17000 + 3000 = 20000

counted_messages = ceil(20000 / 16000) = 2

The same counting rule is used in Writingmate chat and the OpenAI-compatible API.
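If you call the OpenAI-compatible API, you can estimate the count from the usage object in each response. This sketch assumes the response uses OpenAI's standard usage field names (prompt_tokens, completion_tokens, and prompt_tokens_details.cached_tokens for /chat/completions; input_tokens, output_tokens, and input_tokens_details.cached_tokens for /responses). Check your actual responses before relying on it; Writingmate's own counter remains authoritative.

```python
import json
import math

TOKENS_PER_MESSAGE = 16_000


def counted_messages_from_usage(usage: dict) -> int:
    """Estimate counted messages from an OpenAI-style `usage` object.

    Assumption: the usage object follows OpenAI's field names, either the
    Chat Completions shape or the Responses shape.
    """
    prompt = usage.get("prompt_tokens", usage.get("input_tokens", 0))
    completion = usage.get("completion_tokens", usage.get("output_tokens", 0))
    details = usage.get("prompt_tokens_details") or usage.get("input_tokens_details") or {}
    cached = details.get("cached_tokens", 0)

    # Same formula as above: half of the cached tokens is discounted.
    cached_discount = math.floor(cached * 0.5)
    effective_input = max(0, prompt - cached_discount)
    return max(1, math.ceil((effective_input + completion) / TOKENS_PER_MESSAGE))


# A usage block matching the "larger request" example above.
raw = ('{"prompt_tokens": 19000, "completion_tokens": 3000, '
       '"prompt_tokens_details": {"cached_tokens": 4000}}')
print(counted_messages_from_usage(json.loads(raw)))  # 2
```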

How this affects plans

Your plan controls:

- which model categories you can access

- how many counted messages you can use each day or month

- whether AppSumo pool limits apply

Each tier comes with its own daily allowance. Basic-tier models include a generous daily allowance, much higher than the Pro or Ultimate tiers, so they're a great choice for everyday questions, drafts, and high-volume work. The allowance is the same whether you're using Writingmate chat or the OpenAI-compatible API.

Text endpoints of the OpenAI-compatible API (/chat/completions, /completions, /responses) do not use a separate quota. They use the same message counter as Writingmate chat.
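Because chat and text API requests draw from one counter, a client-side tracker only needs a single running total. The class below is a hypothetical sketch: the allowance of 50 is a placeholder, not a real plan value, and your actual remaining usage is whatever Writingmate reports.

```python
import math
from dataclasses import dataclass, field

TOKENS_PER_MESSAGE = 16_000


@dataclass
class SharedMessageBudget:
    """Hypothetical client-side tracker for one counter shared by chat and API."""
    allowance: int  # placeholder; the real value depends on your plan and model tier
    used: int = 0
    by_source: dict = field(default_factory=dict)

    def record(self, source: str, effective_tokens: int) -> int:
        """Record one request (e.g. 'chat' or 'api') and return its counted messages."""
        cost = max(1, math.ceil(effective_tokens / TOKENS_PER_MESSAGE))
        self.used += cost
        self.by_source[source] = self.by_source.get(source, 0) + cost
        return cost

    @property
    def remaining(self) -> int:
        return max(0, self.allowance - self.used)


budget = SharedMessageBudget(allowance=50)  # hypothetical allowance
budget.record("chat", 20_000)               # 2 messages
budget.record("api", 500)                   # 1 message
budget.record("api", 40_000)                # 3 messages
print(budget.used, budget.remaining, budget.by_source)
# 6 44 {'chat': 2, 'api': 4}
```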

Image and video billing

OpenAI-compatible image and video generation endpoints are currently disabled while usage tracking is validated. Calls to POST /images/generations, POST /videos, and GET /videos/{id} return openai_compatible_media_api_disabled.

When those endpoints are enabled, they do not count against your message allowance. They draw from dedicated pools:

- POST /images/generations consumes 1 image credit per successful generation from your workspace's image pool.

- POST /videos consumes the requested seconds value from your workspace's video-seconds pool and is additionally capped by your plan's monthly video count.

On AppSumo plans, the image and video pools are the lifetime credits bundled with your tier. On other plans, they follow the plan's per-period caps shown in Settings.
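The pool rules above can be modeled as a small piece of state, for example to plan usage once the media endpoints are enabled. This is a hypothetical sketch: the class, the error messages, and the choice to charge video seconds when the request is made are assumptions, and it does not model period resets or AppSumo lifetime credits.

```python
from dataclasses import dataclass


@dataclass
class MediaPools:
    """Hypothetical workspace media pools following the rules described above."""
    image_credits: int
    video_seconds: int
    videos_left_this_month: int

    def charge_image(self, succeeded: bool) -> None:
        # 1 image credit per successful generation; failed generations cost nothing.
        if not succeeded:
            return
        if self.image_credits < 1:
            raise RuntimeError("image pool exhausted")
        self.image_credits -= 1

    def charge_video(self, seconds: int) -> None:
        # A video needs both a remaining monthly video slot and enough pooled seconds.
        if self.videos_left_this_month < 1:
            raise RuntimeError("monthly video count reached")
        if seconds > self.video_seconds:
            raise RuntimeError("not enough video seconds left")
        self.video_seconds -= seconds
        self.videos_left_this_month -= 1


pools = MediaPools(image_credits=2, video_seconds=20, videos_left_this_month=1)
pools.charge_image(succeeded=True)
pools.charge_image(succeeded=False)
pools.charge_video(8)
print(pools)
# MediaPools(image_credits=1, video_seconds=12, videos_left_this_month=0)
```

Note that the monthly video count can block a request even when seconds remain in the pool.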

Tips for using messages efficiently

- Start new chats often when history is no longer useful

- Limit large pasted context to only the relevant sections

- Use lower-cost models for repetitive or high-volume tasks

- In long iterative workflows, watch how many prompt tokens are cached, since cached tokens count at only 50%
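The first tip follows from how history is billed. This sketch compares ten turns in one growing chat with ten fresh chats, assuming each request resends the full history and ignoring prompt caching (which would lower the long-chat figure). The token sizes are made up for illustration.

```python
import math

TOKENS_PER_MESSAGE = 16_000


def messages(effective_tokens: int) -> int:
    """Counted messages for one request: round up, minimum 1."""
    return max(1, math.ceil(effective_tokens / TOKENS_PER_MESSAGE))


def one_long_chat(turns: int, prompt_per_turn: int, reply_per_turn: int) -> int:
    """Each request resends the whole history, so the input keeps growing."""
    total, history = 0, 0
    for _ in range(turns):
        request_input = history + prompt_per_turn
        total += messages(request_input + reply_per_turn)
        history = request_input + reply_per_turn
    return total


def fresh_chat_each_turn(turns: int, prompt_per_turn: int, reply_per_turn: int) -> int:
    """Each request starts a new chat, so only that turn's tokens count."""
    return turns * messages(prompt_per_turn + reply_per_turn)


print(one_long_chat(10, 3_000, 1_000))         # 18
print(fresh_chat_each_turn(10, 3_000, 1_000))  # 10
```

Starting fresh is only cheaper when the earlier history is no longer needed; otherwise the model loses context you would have to paste in again.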

Unlimited or BYOK behavior

If you connect your own OpenRouter API key, Writingmate can route supported requests through that key. In that case, provider billing and limit behavior may differ from standard Writingmate-managed usage.